Decoding the Tech Topic: Data Platforms

Databricks Spark jobs optimization techniques: Shuffle partition technique

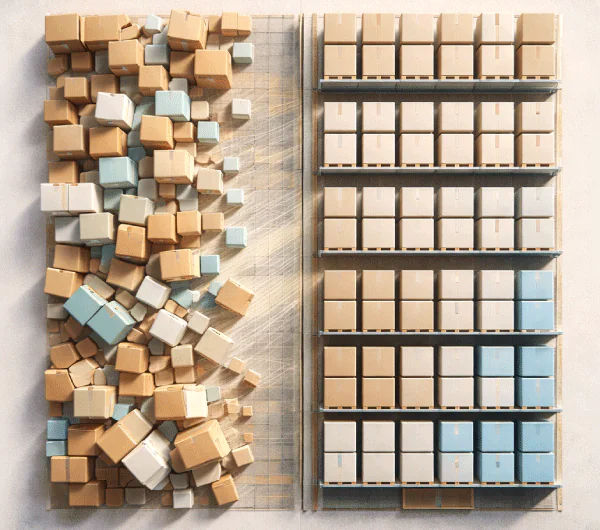

Optimizing Spark performance depends on tuning the number of shuffle partitions so that work is spread evenly across executors. Too few partitions create oversized tasks that spill to disk or run out of memory; too many create small tasks whose scheduling overhead slows the pipeline. Adaptive Query Execution (AQE) and skew handling improve efficiency by adjusting partitions at runtime: small partitions are coalesced and skewed ones are split. In real-world use, such as a modern lakehouse implementation, these optimizations enable faster processing, real-time insights, and scalable data operations, turning complex data workloads into efficient, high-performance systems.
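As a rough illustration of the tuning described above, here is a minimal sketch in plain Python that derives a shuffle partition count from an estimated shuffle size and pairs it with the AQE settings. The helper names (`suggest_shuffle_partitions`, `shuffle_tuning_conf`), the 128 MiB target partition size, and the "round up to a multiple of the core count" rule are assumptions chosen for illustration, not a method taken from the article; only the `spark.sql.*` keys are standard Spark configuration properties.

```python
import math

MIB = 1024 * 1024


def suggest_shuffle_partitions(shuffle_bytes, target_partition_bytes=128 * MIB, total_cores=8):
    """Heuristic (an assumption, not an official rule): aim for partitions of
    roughly the target size, rounded up to a multiple of the available cores
    so each wave of tasks keeps every core busy."""
    if shuffle_bytes <= 0:
        return total_cores
    raw = math.ceil(shuffle_bytes / target_partition_bytes)
    rounded = math.ceil(raw / total_cores) * total_cores
    return max(total_cores, rounded)


def shuffle_tuning_conf(shuffle_bytes, target_partition_bytes=128 * MIB, total_cores=8):
    """Build a dict of Spark SQL settings: a static partition count as the
    starting point, plus AQE so Spark can coalesce small partitions and
    split skewed ones at runtime."""
    partitions = suggest_shuffle_partitions(shuffle_bytes, target_partition_bytes, total_cores)
    return {
        "spark.sql.shuffle.partitions": str(partitions),
        "spark.sql.adaptive.enabled": "true",
        "spark.sql.adaptive.coalescePartitions.enabled": "true",
        "spark.sql.adaptive.skewJoin.enabled": "true",
        # A plain number is interpreted as bytes.
        "spark.sql.adaptive.advisoryPartitionSizeInBytes": str(target_partition_bytes),
    }


if __name__ == "__main__":
    # Hypothetical workload: about 100 GiB of shuffle data on a 16-core cluster.
    for key, value in shuffle_tuning_conf(100 * 1024 * MIB, total_cores=16).items():
        print(f"{key} = {value}")
```

On a live cluster, each pair could be applied to an existing session with `spark.conf.set(key, value)`. The right target size depends on the workload and executor memory, so the numbers here should be treated as a starting point to measure against, not as recommended values.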


How Advisory Services Turn AI Hype into Real Business Results

An effective AI implementation strategy bridges the gap between experimentation and real business value by aligning models with specific industry needs, data quality, and operational workflows. Through AI transformation consulting, organizations move beyond generic solutions to deploy scalable, interpretable, and domain-specific systems. From underwriting and demand forecasting to GenAI-driven retrieval, this approach ensures measurable outcomes, builds stakeholder trust, and turns AI investments into sustained competitive advantage.
